Apache Kafka is an open-source distributed event streaming platform composed of servers and clients that communicate through the TCP protocol.

LinkedIn initially created Kafka as a high-performance messaging system and open sourced it in early 2011.

After many years of improvements, Apache Kafka is now often described as a distributed commit log system or a distributed streaming platform.

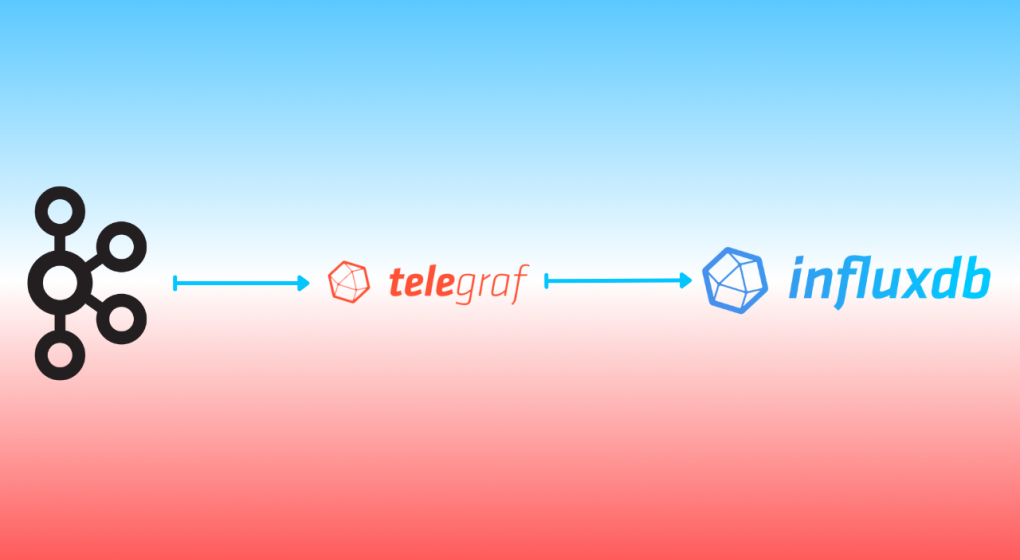

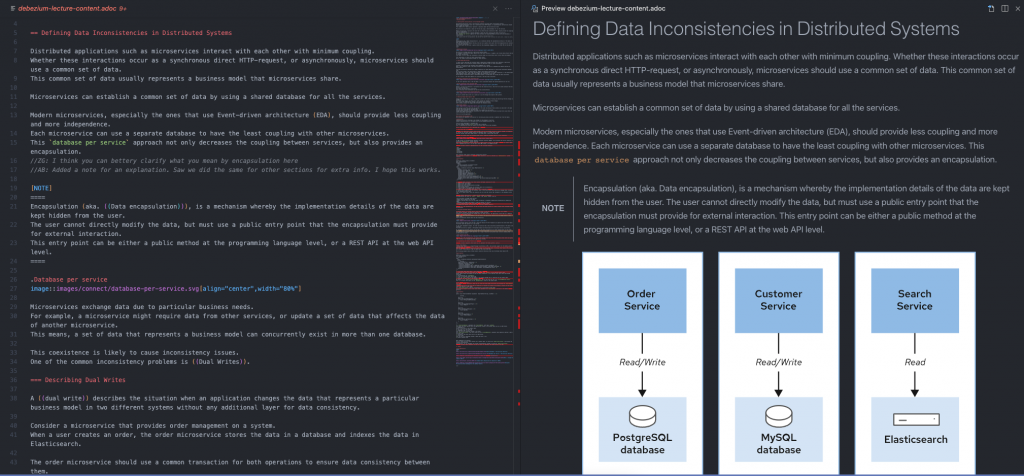

Apache Kafka is an important project in the cloud-native era. Distributed architectures built from services or microservices often need an event-based system that handles data in an event-driven way, and Kafka plays an important role in such systems.

With Kafka, you can build an event-driven architecture in which all microservices communicate through events, aggregate your logs and benefit from Kafka's durability, send your website tracking data, integrate data with Kafka Connect, and, most importantly, create real-time stream processing pipelines.

These use cases are common among companies today because, from small startups to large enterprises, companies need to keep up with cloud, cloud-native, and real-time systems to provide better products and services to their customers.

Data is one of the most important assets for these companies, which is why they rely on Apache Kafka for many use cases.

However, when you work with data, you must think about its security: you might be working with financial data that you want to isolate, or simply with user information that you must keep private.

This matters both for the company and for its users or service consumers.

Because it is all about data and keeping that data secure, you should secure every part of your system that touches the data, including Apache Kafka.

Apache Kafka provides several ways to make the system more secure.

It provides SSL encryption, which you can configure both between Apache Kafka brokers and between the server and its clients.

Kafka also provides authentication and authorization (auth/auth) capabilities.

The following is a compact list of the auth/auth components that Apache Kafka 3.3.1 provides.

At the time of this writing, Kafka 3.3.1 was the latest release.

- Authentication
  - Secure Sockets Layer (SSL)
  - Simple Authentication and Security Layer (SASL) or SASL-SSL
    - Kerberos
    - PLAIN
    - SCRAM-SHA-256/SCRAM-SHA-512
    - OAUTHBEARER
- Authorization

As you can see, Kafka has a rich list of authentication options.

In this article, you will learn how to set up an SSL connection to encrypt the client-server communication and how to set up SASL-SSL authentication with the SCRAM-SHA-512 mechanism.

What is SSL Encryption?

SSL is a protocol that enables encrypted communication between network components.

SSL encryption prevents attackers from intercepting network traffic and reading sensitive data, for example through a man-in-the-middle (MITM) attack.

Before SSL and Transport Layer Security (TLS) were used on every web page, websites used them mainly for pages that transfer sensitive data, such as payment pages and shopping carts.

TLS is the successor of the SSL protocol. It uses digital documents called certificates, which the communicating components use to validate the encrypted connection.

A certificate is tied to a key pair, a public key and a private key, which serve different purposes: the certificate itself contains the public key, while the private key is stored separately.

The public key, which clients receive as part of the server's certificate, allows a client (e.g. a web browser) to set up an encrypted session with the server over TLS, as HTTPS does.

The private key, on the other hand, must be kept secret on the server side (e.g. on the server that serves the web pages to the browser). The server uses it to sign data during the handshake, which proves to the client that the server really owns the certificate.
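To make the roles of the two keys concrete, here is a small shell sketch that uses openssl to generate a throwaway RSA key pair, sign a message with the private key, and verify the signature with the public key. It only illustrates the asymmetric-key idea behind the handshake, not the handshake itself, and sign_and_verify is a hypothetical helper name, not part of Kafka or the demo repository.

```shell
# Illustrative sketch only: sign_and_verify is a hypothetical helper,
# not part of Kafka or the kafka-sasl-ssl-demo repository.
sign_and_verify() {
  local message="$1" dir status
  dir="$(mktemp -d)"
  printf '%s' "$message" > "$dir/message.txt"

  # 1. Generate a throwaway RSA private key and derive the matching public key.
  # 2. Sign the message with the private key (only the key owner can do this).
  # 3. Verify the signature with the public key (anyone can do this);
  #    openssl prints "Verified OK" when the signature matches.
  openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 \
      -out "$dir/private.pem" 2>/dev/null &&
    openssl pkey -in "$dir/private.pem" -pubout -out "$dir/public.pem" &&
    openssl dgst -sha256 -sign "$dir/private.pem" \
      -out "$dir/message.sig" "$dir/message.txt" &&
    openssl dgst -sha256 -verify "$dir/public.pem" \
      -signature "$dir/message.sig" "$dir/message.txt"
  status=$?

  rm -rf "$dir"   # remove the throwaway keys and files
  return "$status"
}
```

For example, sign_and_verify 'credit-risk-request' prints Verified OK. If the message changed between signing and verifying, the last step would report a verification failure instead.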

The negotiation in which the client and the server verify the certificate and agree on the keys for the encrypted session is called the “SSL handshake”.

The following image shows a simplified view of how SSL works.

In the following tutorial, you will create your own self-signed certificate authority (CA) and use it to sign the server's certificate.

For more information on the certificates, private and public keys, certificate authorities and many more, visit this web page as a starting point.

What is Simple Authentication and Security Layer (SASL)?

SASL is a data security framework for authentication.

It acts as an adapter interface between authentication mechanisms and application protocols, so a protocol that supports SASL can use any SASL mechanism.

This makes it easy for applications or middleware, such as Apache Kafka, to adopt SASL authentication with different mechanisms.

SASL supports many mechanisms, such as PLAIN, CRAM-MD5, DIGEST-MD5, SCRAM, Kerberos (via GSSAPI), OAUTHBEARER, and OAUTH10A.

Apache Kafka (version 3.3.1 at the time of writing) supports Kerberos (GSSAPI), PLAIN, SCRAM-SHA-256/SCRAM-SHA-512, and OAUTHBEARER for SASL authentication.

The PLAIN mechanism is fairly easy to set up; it only requires a username and a password.

The SCRAM mechanism also requires a username and a password, but instead of sending the password to the server, the client proves that it knows the password by answering a salted challenge (SCRAM stands for Salted Challenge Response Authentication Mechanism).

Thus, SCRAM provides better security than the PLAIN mechanism.

GSSAPI/Kerberos and OAUTHBEARER, on the other hand, provide the strongest security of these options, but they are relatively harder to implement.

Each of these mechanisms requires different server and client configuration in Apache Kafka, with varying effort.
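As a quick reference for how the configuration differs per mechanism, the following shell function maps each SASL mechanism that Kafka supports to the JAAS login module class you would reference in sasl.jaas.config or in a JAAS file. The function name is hypothetical; the class names are the ones used in the Apache Kafka security documentation, but the extra options each module needs (keytabs, token endpoints, and so on) are not covered here.

```shell
# Hypothetical helper: print the JAAS login module class for a Kafka SASL mechanism.
kafka_login_module() {
  case "$1" in
    PLAIN)
      echo "org.apache.kafka.common.security.plain.PlainLoginModule" ;;
    SCRAM-SHA-256|SCRAM-SHA-512)
      # Both SCRAM variants share one login module; sasl.mechanism selects the hash.
      echo "org.apache.kafka.common.security.scram.ScramLoginModule" ;;
    OAUTHBEARER)
      echo "org.apache.kafka.common.security.oauthbearer.OAuthBearerLoginModule" ;;
    GSSAPI)
      # Kerberos uses the JDK's own login module.
      echo "com.sun.security.auth.module.Krb5LoginModule" ;;
    *)
      echo "unsupported SASL mechanism: $1" >&2
      return 1 ;;
  esac
}
```

For example, kafka_login_module SCRAM-SHA-512 prints the ScramLoginModule class that the client configuration in this article uses.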

Independently of the mechanism, you can run SASL on top of an SSL connection in Kafka to gain extra security, so that both the credentials and the data are encrypted in transit.

In Kafka, this is called the SASL_SSL security protocol.

In the following tutorial, you will enable SSL first, then you will enable SASL on it using the SCRAM-SHA-512 mechanism.

Prerequisites

You’ll need the following prerequisites for this tutorial:

- A Linux environment with wget and openssl installed.
- Java SDK 11 (be sure that the keytool command is installed).
- The kafka-sasl-ssl-demo repository.

Clone this repository to use the ready-to-use Kafka start/stop shell scripts and Kafka configurations.

You will use the cloned repository as your main workspace directory throughout this tutorial.
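Before you start, you can check that the required command-line tools are on your PATH. The function below is a hypothetical convenience helper, not part of the kafka-sasl-ssl-demo repository; it only checks that the commands exist, not that the installed Java is version 11.

```shell
# Hypothetical helper: report which of the given commands are missing from PATH.
# Returns a non-zero status if at least one command is missing.
check_tools() {
  local missing=0 tool
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Run check_tools wget openssl keytool java; it prints a missing: line for every tool it cannot find. java -version then shows which Java version is installed.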

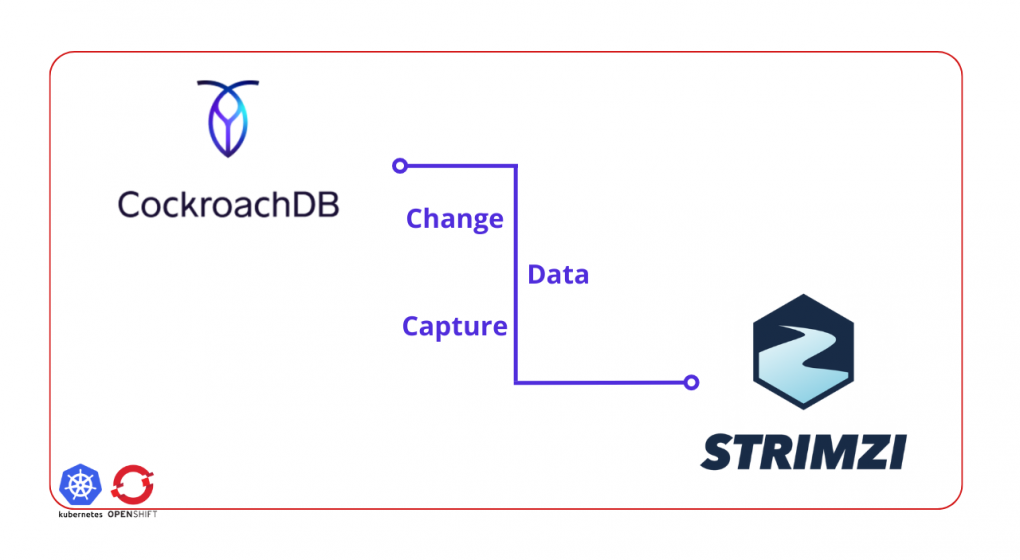

CreditRiskCom Inc.’s Need for Kafka Security

CreditRiskCom Inc. is a credit risk analysis company that works with banks and government systems.

They are integrating with a government system that sends credit risk requests to their Kafka topic, where their applications consume them.

Their applications create the requested report and serve it to the government system.

Because the credit risk request data is sensitive and Apache Kafka plays a big role in the system, they want to secure it.

They hire you as a Kafka consultant to help them set up a secure Kafka cluster with SSL and SASL.

The following diagram shows the required secure architecture for Kafka.

Running the Apache Kafka Cluster

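Navigate to the main directory of your cloned repository, kafka-sasl-ssl-demo.

In this directory, download Apache Kafka 3.3.1 by using the following command.

```shell
wget https://downloads.apache.org/kafka/3.3.1/kafka_2.13-3.3.1.tgz
```

Older releases are eventually moved from downloads.apache.org to the Apache archive, so if the command returns a 404 error, download the same file from https://archive.apache.org/dist/kafka/3.3.1/kafka_2.13-3.3.1.tgz instead.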

Extract the tgz file and rename the extracted directory to kafka by using the following command.

```shell
tar -xzf kafka_2.13-3.3.1.tgz && mv kafka_2.13-3.3.1 kafka
```

Run the start script that is located in the bin directory under kafka-sasl-ssl-demo.

Note that the following command also makes the start-cluster.sh file executable.

```shell
chmod +x ./bin/start-cluster.sh && ./bin/start-cluster.sh
```

The preceding command runs the ZooKeeper and Apache Kafka instances that you've downloaded.

The script uses the configurations that are located under resources/config.

Create a Kafka topic called credit-risk-request:

```shell
./kafka/bin/kafka-topics.sh \
  --bootstrap-server localhost:9092 \
  --create \
  --topic credit-risk-request \
  --replication-factor 1 --partitions 1
```

The output should be as follows.

```
Created topic credit-risk-request.
```

This output verifies that the Kafka cluster is working without any issues.

In a new terminal window, start the Kafka console producer to send a few sample records.

```shell
./kafka/bin/kafka-console-producer.sh \
  --broker-list localhost:9093 \
  --topic credit-risk-request \
  --property parse.key=true \
  --property key.separator=":"
```

At the producer's input prompt, you will enter the records one per line in the format ID:NAME,SURNAME,RISK_LEVEL, that is, the ID of the credit risk record, the person's name and surname, and the risk level.

Notice that parse.key=true together with key.separator=":" makes the producer use the ID before the colon as the record key.
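The console producer's line reader splits each input line at the first occurrence of the key separator: the part before it becomes the record key and the rest becomes the value. The following bash sketch mimics that split for this tutorial's records with parameter expansion. The function name is hypothetical, and the comma-separated value layout (name, surname, risk level) is only this tutorial's convention; Kafka itself treats the value as opaque text.

```shell
# Illustrative sketch: split "ID:Name,Surname,RISK" the way parse.key=true with
# key.separator=":" does, i.e. at the first ":" only.
split_record() {
  local line="$1"
  if [[ "$line" != *:* ]]; then
    echo "no key separator in: $line" >&2
    return 1
  fi
  local key="${line%%:*}"    # everything before the first ":"
  local value="${line#*:}"   # everything after the first ":"

  # The value layout is this tutorial's own convention, not a Kafka format.
  local name surname risk
  IFS=',' read -r name surname risk <<< "$value"
  printf 'key=%s name=%s surname=%s risk=%s\n' "$key" "$name" "$surname" "$risk"
}
```

For example, split_record '87612:Walter,White,HIGH' prints key=87612 name=Walter surname=White risk=HIGH.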

Before sending the records, leave the producer window open and open another terminal window.

Run the console consumer by using the following command.

```shell
./kafka/bin/kafka-console-consumer.sh \
  --bootstrap-server localhost:9093 \
  --topic credit-risk-request \
  --property print.key=true \
  --property key.separator=":"
```

Switch back to the terminal where your console producer is running and enter these values:

```
34586:Kaiser,Soze,HIGH
87612:Walter,White,HIGH
34871:Takeshi,Kovacs,MEDIUM
```

The output of the console consumer on the other terminal window should be as follows.

```
34586:Kaiser,Soze,HIGH
87612:Walter,White,HIGH
34871:Takeshi,Kovacs,MEDIUM
```

Stop the console consumer and producer by pressing CTRL+C on your keyboard, and keep each terminal window open for later use in this tutorial.

Setting Up the SSL Encryption

As CreditRiskCom Inc. requested, you must secure access to the Kafka cluster with SSL.

To secure the Kafka cluster, you must set up the SSL encryption on the Kafka server first, then you must configure the clients.

Configuring the Kafka Server to use SSL Encryption

Navigate to the resources/config directory under kafka-sasl-ssl-demo and run the following command to create the certificate authority (CA) and its private key.

```shell
openssl req -new -newkey rsa:4096 -days 365 -x509 -subj "/CN=Kafka-Security-CA" -keyout ca-key -out ca-cert -nodes
```

Run the following command to store the server password in an environment variable.

You will use this variable throughout this article.

```shell
export SRVPASS=serverpass
```

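Run the keytool command to generate a keystore called kafka.server.keystore.jks. Despite the .jks extension, the -storetype pkcs12 option creates the keystore in PKCS12 format, and the -keyalg RSA option requests an RSA key pair regardless of keytool's default key algorithm, which differs between JDK versions.

For more information about keytool, visit this documentation web page.

```shell
keytool -genkey -keystore kafka.server.keystore.jks -validity 365 -storepass $SRVPASS -keypass $SRVPASS -dname "CN=localhost" -storetype pkcs12 -keyalg RSA
```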

To have the CA sign the server certificate, create a certificate signing request file.

```shell
keytool -keystore kafka.server.keystore.jks -certreq -file cert-sign-request -storepass $SRVPASS -keypass $SRVPASS
```

Sign the certificate request with the CA.

This creates a file called cert-signed.

```shell
openssl x509 -req -CA ca-cert -CAkey ca-key -in cert-sign-request -out cert-signed -days 365 -CAcreateserial -passin pass:$SRVPASS
```
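Before importing anything into the stores, you can check that cert-signed really chains up to your CA. The sketch below wraps openssl verify in a small function whose name is hypothetical; run it from resources/config, where the files from the previous commands are located.

```shell
# Hypothetical helper: check that a certificate was signed by the given CA certificate.
# openssl verify prints "<file>: OK" and exits with status 0 on success,
# and exits with a non-zero status if the chain does not verify.
verify_signed_cert() {
  local ca_cert="$1" cert="$2"
  openssl verify -CAfile "$ca_cert" "$cert"
}
```

From resources/config, verify_signed_cert ca-cert cert-signed should print cert-signed: OK.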

Trust the CA by creating a truststore file and importing the ca-cert into it.

```shell
keytool -keystore kafka.server.truststore.jks -alias CARoot -import -file ca-cert -storepass $SRVPASS -keypass $SRVPASS -noprompt
```

Import the CA and the signed server certificate into the keystore.

```shell
keytool -keystore kafka.server.keystore.jks -alias CARoot -import -file ca-cert -storepass $SRVPASS -keypass $SRVPASS -noprompt && \
keytool -keystore kafka.server.keystore.jks -import -file cert-signed -storepass $SRVPASS -keypass $SRVPASS -noprompt
```

You can use the created keystore and truststore in your Kafka server’s configuration.

Open the server.properties file with an editor of your choice and add the following configuration.

```properties
ssl.keystore.location=./resources/config/kafka.server.keystore.jks
ssl.keystore.password=serverpass
ssl.key.password=serverpass
ssl.truststore.location=./resources/config/kafka.server.truststore.jks
ssl.truststore.password=serverpass
```

Notice that these are the same passwords you used when creating the store files.

The preceding configuration alone is not enough to make the server use SSL.

You must also define an SSL port in the listeners and advertised.listeners properties.

The updated properties should be as follows:

```properties
# ...configuration omitted...
listeners=PLAINTEXT://localhost:9092,SSL://localhost:9093
advertised.listeners=PLAINTEXT://localhost:9092,SSL://localhost:9093
# ...configuration omitted...
```

Configuring the Client to use SSL Encryption

Run the following command to store the client password in an environment variable.

You will use this variable to create a truststore for the client.

```shell
export CLIPASS=clientpass
```

Run the following command to create a truststore for the client by importing the CA certificate.

```shell
keytool -keystore kafka.client.truststore.jks -alias CARoot -import -file ca-cert -storepass $CLIPASS -keypass $CLIPASS -noprompt
```

Create a file called client.properties in the resources/config directory with the following content.

```properties
security.protocol=SSL
ssl.truststore.location=./resources/config/kafka.client.truststore.jks
ssl.truststore.password=clientpass
ssl.endpoint.identification.algorithm=
```

Setting ssl.endpoint.identification.algorithm to an empty value disables host name verification, so the client does not check that the broker's host name matches the name in its certificate.
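With host name verification disabled, the client only checks that the broker's certificate chains to the trusted CA. If you later keep verification enabled (the Kafka client default is https), the broker certificate must carry the host name that clients connect to, typically as a subject alternative name. The sketch below, with a hypothetical function name, prints a certificate's subject and any subject alternative names so you can see what a client could match; the server certificate created in this tutorial only has CN=localhost.

```shell
# Hypothetical helper: show the names a TLS client could match during
# host name verification (subject and subjectAltName entries).
show_cert_names() {
  local cert="$1"
  # Subject line, for example: subject=CN=localhost
  openssl x509 -in "$cert" -noout -subject -nameopt RFC2253
  # Subject alternative names, if the certificate has any.
  openssl x509 -in "$cert" -noout -text \
    | grep -A1 "Subject Alternative Name" || echo "no subjectAltName extension"
}
```

From resources/config, show_cert_names cert-signed prints the subject of the signed server certificate.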

Verifying the SSL Encryption

To verify the SSL Encryption configuration, you must restart the Kafka server.

Run the following command to restart the server.

```shell
./bin/stop-cluster.sh && ./bin/start-cluster.sh
```
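Before running any Kafka client, you can confirm that the broker's SSL listener completes a TLS handshake and presents a certificate signed by your CA. The sketch below, with a hypothetical function name, runs openssl s_client against a host:port, uses the given CA certificate as the trust anchor, and checks the verification result that s_client reports. Because SASL authentication takes place after the TLS handshake, the same check should also work once the listener is switched to SASL_SSL later in this article.

```shell
# Hypothetical helper: check that host:port completes a TLS handshake whose
# certificate chain verifies against the given CA certificate.
check_tls_handshake() {
  local endpoint="$1" ca_cert="$2" output
  # </dev/null makes s_client close the connection right after the handshake.
  output="$(openssl s_client -connect "$endpoint" -CAfile "$ca_cert" </dev/null 2>&1)"
  if grep -q "Verify return code: 0 (ok)" <<< "$output"; then
    echo "TLS handshake with $endpoint verified"
  else
    echo "TLS handshake with $endpoint failed or was not verified" >&2
    return 1
  fi
}
```

From the repository root, for example, run check_tls_handshake localhost:9093 resources/config/ca-cert.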

Run the console consumer in the consumer terminal window you used before.

```shell
./kafka/bin/kafka-console-consumer.sh \
  --bootstrap-server localhost:9093 \
  --topic credit-risk-request \
  --property print.key=true \
  --property key.separator=":"
```

You should see errors, because the consumer connects to the SSL port without any SSL client configuration.

Add the --consumer.config flag with the value ./resources/config/client.properties, which points to the client properties you've set.

```shell
./kafka/bin/kafka-console-consumer.sh \
  --bootstrap-server localhost:9093 \
  --topic credit-risk-request \
  --property print.key=true \
  --property key.separator=":" \
  --consumer.config ./resources/config/client.properties
```

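You should see no errors, but also no consumed messages, because you haven't sent any new messages to the credit-risk-request topic yet (without --from-beginning, the console consumer only reads messages produced after it starts).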

Run the console producer in the producer terminal window.

Use the same ./resources/config/client.properties configuration file.

```shell
./kafka/bin/kafka-console-producer.sh \
  --broker-list localhost:9093 \
  --topic credit-risk-request \
  --property parse.key=true \
  --property key.separator=":" \
  --producer.config ./resources/config/client.properties
```

Enter the following sample credit risk record values one by one on the producer input screen:

```
99612:Katniss,Everdeen,LOW
37786:Gregory,House,MEDIUM
```

You should see the same records in the consumer terminal window.

Stop the console consumer and producer by pressing CTRL+C on your keyboard, and keep each terminal window open for later use in this tutorial.

Setting Up the SASL-SSL Authentication

Setting up SSL is the first step of setting up SASL_SSL authentication.

To enable SASL_SSL, you must add more configuration on the server and the clients.

Configuring the Kafka Server to use SASL-SSL Authentication

Create a file called jaas.conf in the kafka/config directory with the following content.

```
KafkaServer {
    org.apache.kafka.common.security.scram.ScramLoginModule required
    username="kafkadmin"
    password="adminpassword";
};
```

In the server.properties file, add the following configuration to enable the SCRAM-SHA-512 authentication mechanism.

```properties
sasl.enabled.mechanisms=SCRAM-SHA-512
security.protocol=SASL_SSL
security.inter.broker.protocol=PLAINTEXT
```

Notice that you keep security.inter.broker.protocol set to PLAINTEXT, because securing inter-broker communication is out of the scope of this tutorial.

Update the listeners and advertised listeners to use SASL_SSL.

```properties
# ...configuration omitted...
listeners=PLAINTEXT://localhost:9092,SASL_SSL://localhost:9093
advertised.listeners=PLAINTEXT://localhost:9092,SASL_SSL://localhost:9093
# ...configuration omitted...
```

Run the following command to set the KAFKA_OPTS environment variable so that the broker uses the jaas.conf file you've created. Run it in the terminal where you start the cluster, because the start script only inherits variables exported in its own shell.

Don't forget to replace _YOUR_FULL_PATH_TO_THE_DEMO_FOLDER_ with the full path to the kafka-sasl-ssl-demo directory.

```shell
export KAFKA_OPTS=-Djava.security.auth.login.config=/_YOUR_FULL_PATH_TO_THE_DEMO_FOLDER_/kafka/config/jaas.conf
```
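If you run the command from the kafka-sasl-ssl-demo directory, you can let the shell fill in the absolute path instead of editing the placeholder by hand. The following sketch assumes that the current directory is the repository root, that jaas.conf is at kafka/config/jaas.conf as described above, and that the path contains no spaces. It also warns if the file does not exist, because a wrong path would otherwise only surface when the broker starts.

```shell
# Sketch: build KAFKA_OPTS from the current directory, assumed to be the
# kafka-sasl-ssl-demo repository root. Paths with spaces are not handled.
jaas_file="$(pwd)/kafka/config/jaas.conf"
if [ ! -f "$jaas_file" ]; then
  echo "warning: $jaas_file does not exist yet" >&2
fi
export KAFKA_OPTS="-Djava.security.auth.login.config=$jaas_file"
echo "KAFKA_OPTS=$KAFKA_OPTS"
```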

Configuring the Client to use SASL-SSL Authentication

Open the client.properties file, change the security protocol to SASL_SSL, and add the following configuration.

```properties
# ...configuration omitted...
sasl.mechanism=SCRAM-SHA-512
sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required \
    username="kafkadmin" \
    password="adminpassword";
# ...configuration omitted...
```
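For reference, here is what the complete client.properties could look like after both changes, written with a shell here-document so you can regenerate it in one step. It assumes that you run it from the kafka-sasl-ssl-demo directory and that the client truststore and passwords are the demo values created earlier in resources/config. Note that it overwrites the existing file. The sasl.jaas.config value is written on a single line, which is equivalent to the backslash-continued form shown above.

```shell
# Sketch: regenerate the SASL_SSL client configuration used in this tutorial.
# Paths and credentials are the tutorial's demo values; do not reuse them elsewhere.
mkdir -p resources/config
cat > resources/config/client.properties <<'EOF'
security.protocol=SASL_SSL
ssl.truststore.location=./resources/config/kafka.client.truststore.jks
ssl.truststore.password=clientpass
ssl.endpoint.identification.algorithm=
sasl.mechanism=SCRAM-SHA-512
sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="kafkadmin" password="adminpassword";
EOF
```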

Verifying the SASL-SSL Authentication

To verify the SASL-SSL authentication configuration, you must restart the Kafka server.

Run the following command to restart the server.

```shell
./bin/stop-cluster.sh && ./bin/start-cluster.sh
```
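The broker's jaas.conf only configures the broker's own login. In Kafka's ZooKeeper-based SCRAM implementation, the credentials that clients authenticate against are stored in ZooKeeper, and this article does not show that step; the repository's start script may already handle it. If the console clients fail with an authentication error even though the credentials match, you can register the kafkadmin user with kafka-configs.sh over the PLAINTEXT listener on port 9092, which stays enabled in this setup. The wrapper function name is hypothetical; the kafka-configs.sh options are the ones documented for managing SCRAM credentials.

```shell
# Hypothetical wrapper: register SCRAM-SHA-512 credentials for a Kafka user.
# Assumes it runs from the kafka-sasl-ssl-demo directory while the broker is up
# and still exposes the PLAINTEXT listener on localhost:9092.
# Passwords containing "," or "]" would need extra quoting and are not handled.
create_scram_user() {
  local user="$1" password="$2"
  ./kafka/bin/kafka-configs.sh \
    --bootstrap-server localhost:9092 \
    --alter \
    --add-config "SCRAM-SHA-512=[password=${password}]" \
    --entity-type users \
    --entity-name "$user"
}
```

With this tutorial's demo credentials, run create_scram_user kafkadmin adminpassword.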

Run the console consumer in the consumer terminal window you used before.

```shell
./kafka/bin/kafka-console-consumer.sh \
  --bootstrap-server localhost:9093 \
  --topic credit-risk-request \
  --property print.key=true \
  --property key.separator=":" \
  --consumer.config ./resources/config/client.properties
```

Run the console producer in the producer terminal window.

```shell
./kafka/bin/kafka-console-producer.sh \
  --broker-list localhost:9093 \
  --topic credit-risk-request \
  --property parse.key=true \
  --property key.separator=":" \
  --producer.config ./resources/config/client.properties
```

Enter the following sample credit risk record on the producer input screen:

```
30766:Leia,SkyWalker,LOW
```

You should see the same record in the consumer terminal window.

Optionally, you can change the username or password in the client.properties file to test the SASL_SSL mechanism with wrong credentials.
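To try this without editing your working configuration, you can copy client.properties with a deliberately wrong password and point the console consumer at the copy. The sketch below makes the copy with sed; the function name and the bad-client.properties file name are hypothetical. With the wrong password, the console consumer is expected to fail with an authentication error instead of consuming records.

```shell
# Hypothetical helper: copy a client config, replacing the quoted JAAS password
# with a wrong one. Unquoted entries such as ssl.truststore.password are left as-is.
make_bad_credentials_config() {
  local src="$1" dst="$2"
  sed 's/password="[^"]*"/password="wrongpassword"/' "$src" > "$dst"
}

# Example, from the kafka-sasl-ssl-demo directory:
#   make_bad_credentials_config resources/config/client.properties resources/config/bad-client.properties
#   ./kafka/bin/kafka-console-consumer.sh --bootstrap-server localhost:9093 \
#     --topic credit-risk-request --consumer.config resources/config/bad-client.properties
```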

Conclusion

Congratulations!

You’ve successfully implemented SASL-SSL authentication using the SCRAM-SHA-512 mechanism on an Apache Kafka cluster.

In this article, you’ve learned what SSL, TLS, and SASL are, and how to set up an SSL connection to encrypt the client-server communication on Apache Kafka.

You’ve learned to configure a SASL-SSL authentication with the SCRAM-SHA-512 mechanism on an Apache Kafka cluster.

You can find the resources of the solutions for this tutorial in this directory of the same repository.