Integrating Apache Kafka with InfluxDB

We are in the cloud-native era. The number of applications and services grows every day as application architectures shift toward microservices and serverless. The data these applications and services generate keeps growing, and processing that data in real time is becoming more important.

This processing can be either a real-time aggregation or a calculation whose output is a measurement or a metric. Metrics and measurements have to be monitored, because in the cloud-native era you have to fail fast: the sooner you get the right measurements or metric outputs, the sooner you can react, fix issues, or make the relevant changes to the system. This aligns with the idea stated in the book Accelerate: The Science of Lean Software and DevOps, which grew out of the research behind the State of DevOps reports: “Changes both rapidly and reliably is correlated to organizational success.”

A change in a system can be captured and observed in many ways. The most popular way, especially in a cloud-native environment, is to use events. You might have already heard about event-driven systems, which have become the de facto standard for building loosely coupled distributed systems. You can gather your application metrics, measurements, or logs in an event-driven way (event sourcing, change data capture (CDC), etc.) and send them to a messaging backbone, where another resource, such as a database or an observability tool, consumes them.

In this case, durability and performance are important, and traditional message brokers are not built to provide both.

Apache Kafka, by contrast, is a durable, high-performance messaging system that is also considered a distributed stream-processing platform. Kafka can be used for many use cases, such as messaging, data integration, log aggregation, and, most importantly for this article, metrics.

For metrics, however, a message backbone or broker alone is not enough. Kafka gives you the real-time pipeline, but even though systems like Apache Kafka are durable enough to keep your data forever, they are not designed for running metric queries or for monitoring. Systems such as InfluxDB, on the other hand, can handle both.

InfluxDB is a database, specifically a time-series database. A more detailed definition comes later in this tutorial; in short, a time-series database stores each data point together with its timestamp, which makes it well suited for storing metrics and events.

InfluxDB provides storage and retrieval of time-series data for monitoring, application metrics, Internet of Things (IoT) sensor data, and real-time analytics. It can be integrated with Apache Kafka to send or receive metric and event data for both processing and monitoring. You will read about these integrations in more detail in the following parts of this tutorial.

In this tutorial, you will:

- Run InfluxDB in a Docker container.

- Configure a bucket in InfluxDB.

- Run an Apache Kafka cluster in Docker containers by using Strimzi container images.

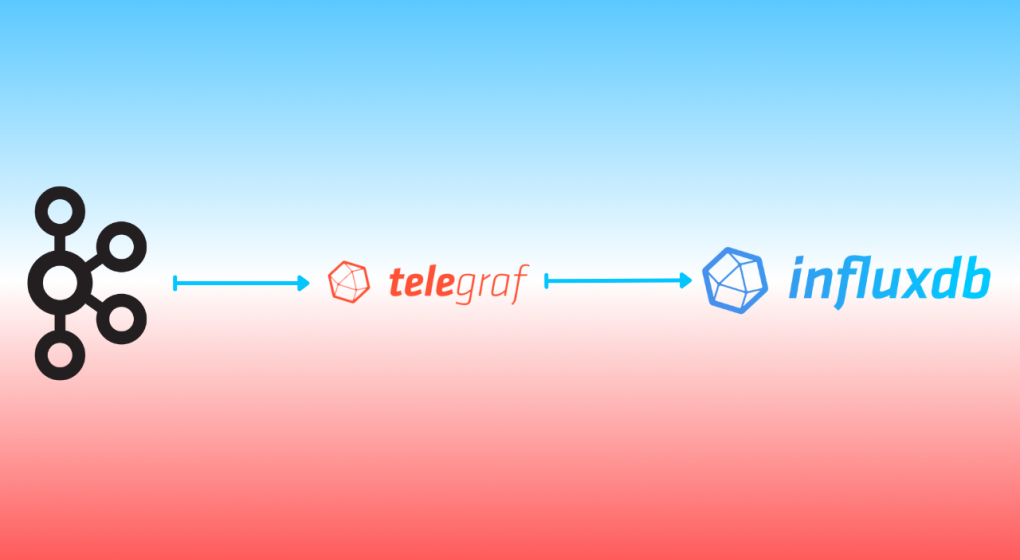

- Install Telegraf, a server agent for collecting and reporting metrics from many sources, such as Kafka.

- Configure Telegraf to integrate with InfluxDB by using its output plugin.

- Configure Telegraf to integrate with Apache Kafka by using its input plugin.

- Run Telegraf with the configuration you created.
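When Telegraf reads from Kafka through its input plugin, it has to turn each message into points before the output plugin writes them to an InfluxDB bucket. As a rough, toy illustration of that parsing step, the Python sketch below assumes the Kafka messages are written in a simplified form of InfluxDB line protocol (one of the data formats Telegraf's Kafka consumer input can parse). It is not Telegraf's actual implementation: it ignores escaping and spaces inside string fields, and the sample messages are hypothetical.

```python
def _parse_value(raw):
    """Convert a raw line-protocol field value into a Python value."""
    if len(raw) >= 2 and raw.startswith('"') and raw.endswith('"'):
        return raw[1:-1]            # string field
    if raw in ("true", "false"):
        return raw == "true"        # boolean field
    if raw.endswith("i"):
        return int(raw[:-1])        # integer field, e.g. 312i
    return float(raw)               # float field, e.g. 93.5


def parse_line_protocol(line):
    """Parse one simplified line-protocol record into a dict.

    Assumes no escaped characters and no spaces inside string fields.
    """
    parts = line.strip().split(" ")
    series, field_part = parts[0], parts[1]
    # The timestamp is optional in line protocol; None means "not given".
    timestamp = int(parts[2]) if len(parts) > 2 else None
    measurement, *tag_pairs = series.split(",")
    tags = dict(pair.split("=", 1) for pair in tag_pairs)
    fields = {}
    for pair in field_part.split(","):
        key, raw = pair.split("=", 1)
        fields[key] = _parse_value(raw)
    return {"measurement": measurement, "tags": tags,
            "fields": fields, "time": timestamp}


# Messages as they might arrive on a Kafka topic (hypothetical sample data).
messages = [
    "cpu,host=server01 usage_idle=93.5,procs=312i 1700000000000000000",
    "cpu,host=server02 usage_idle=87.25,procs=298i 1700000000000000000",
]
points = [parse_line_protocol(m) for m in messages]
print(points[1]["tags"], points[1]["fields"])
# {'host': 'server02'} {'usage_idle': 87.25, 'procs': 298}
```

In the real setup, Telegraf's configuration, rather than hand-written code like this, decides which topics to read, how to parse the messages, and which bucket receives the resulting points.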

Click >>this link<< to read the related tutorial from the InfluxDB Blog.